As artificial intelligence drives record profits and reshapes entire industries, a quieter story is emerging from the back end of the sector: the growing instability of the contract workers who train, clean and monitor the systems the world is rushing to adopt.

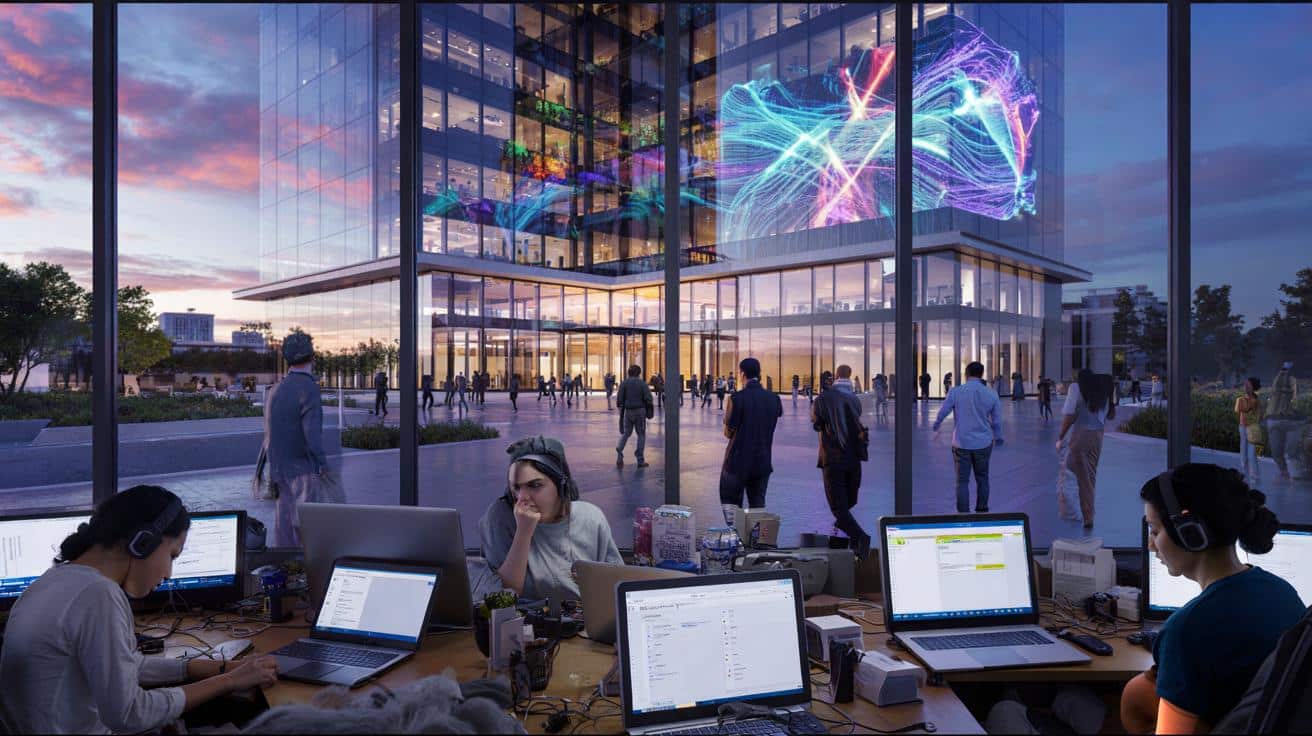

A booming AI economy built on fragile jobs

AI has become the engine room of US growth. According to figures cited by Futurism, companies tied to artificial intelligence accounted for 92% of American GDP growth in the first half of 2025. Investors are euphoric, and tech giants are reorganising their entire strategies around AI models and automation.

At the same time, the broader tech industry looks less like a success story and more like a roller coaster. Amazon slashed around 14,000 roles despite strong results. October was one of the worst months for tech layoffs since 2003. Profits climb. Headcount falls.

AI is generating billions, yet the people doing much of the day‑to‑day labour behind it often live from gig to gig.

A big part of this contrast comes from a quiet shift: instead of hiring large in‑house teams, companies outsource the work that keeps AI systems functioning. That work includes rating chatbot answers, flagging hate speech, tagging images, rewriting prompts and checking whether outputs match company policies.

These tasks rarely make headlines. They are typically done by remote contractors, scattered across the US and the globe, paid by the hour or by the task, without long‑term guarantees.

Inside the invisible workforce training AI

Most people interacting with AI tools assume the magic comes entirely from code. In reality, human workers intervene at nearly every stage of model development and deployment.

- They sort and label text, images, audio and video so models can learn patterns.

- They rate AI responses on accuracy, tone and safety.

- They write and rewrite prompts to make systems behave “helpfully”.

- They check whether AI content breaks laws or platform rules.

This labour shapes how chatbots answer sensitive questions, which images are removed from platforms, and how recommendation engines rank content. Yet many of the people doing it have little protection from abrupt changes in pay or hours.

Workers report sudden pay cuts, dramatic swings in the volume of available tasks, and “paused” projects that never resume. For people who depend on that income to pay rent or medical bills, such instability can be brutal.


Mercor and the 5,000 people dropped overnight

The case of Mercor, a contractor working with Meta and OpenAI, shows how fragile these arrangements can be. Mercor ran a project called Musen that relied on more than 5,000 workers. Many were told the work would last until the end of the year, according to Business Insider reporting.

Then, with almost no notice, Musen was shut down. Access to tasks vanished. Workers described logging into their dashboards and finding nothing. In practical terms, their jobs evaporated overnight.

The same workers were then invited to a nearly identical project, called Nova, at a lower hourly rate, with little change in the tasks required.

Several workers said the new project involved the same type of content evaluation, just under a different name and five dollars less per hour. The nature of the work stayed constant. The value attached to it did not.

This pattern is not limited to one company. Similar accounts have surfaced across the AI ecosystem: projects that shrink without warning; wage cuts justified by “changing budgets”; teams dissolved and rebuilt with fewer, more specialised workers, while the majority are pushed aside.

Short missions, shifting rates, permanent uncertainty

Instead of stable roles, many AI support workers now face a stream of short contracts. Companies design work as “missions” or “campaigns” that can be turned on and off at will.

When projects end, workers are often encouraged to apply for the next one, sometimes at a different rate, sometimes through a different platform, sometimes not at all. No severance, no notice, no clear path forward.

Those who remain often see their workloads swell. Longer hours, tighter deadlines, and pressure to maintain near‑perfect quality scores become the norm. Lower scores can mean fewer tasks, which means lower pay, which in turn raises anxiety.

Many feel trapped. They accept declining conditions because local job markets offer few alternatives that can be done remotely or flexibly. People caring for children, people with disabilities, or those living in rural areas often have limited options.

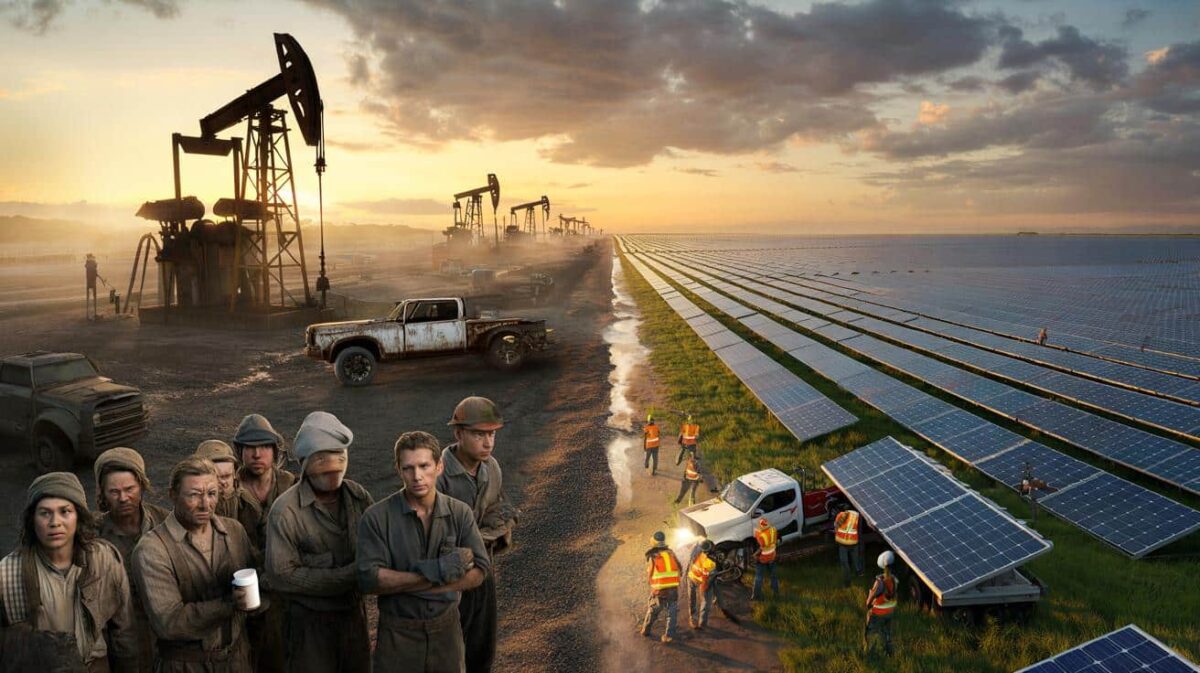

What this shift reveals about our shared future

Tech leaders continue to present AI as a path to better lives. Microsoft CEO Satya Nadella has spoken of a “utopia” in which humans and machines collaborate, as long as people remain in control of the tools. OpenAI’s Sam Altman talks about staggering potential gains alongside serious risks.

On the ground, the tone feels different. Many of the workers buttressing this revolution say they feel disposable. They cycle between platforms, juggle multiple dashboards, and watch pay rates slide while models grow more advanced.

AI was sold as a way to free humans from tedious work, yet that same tedious work has simply been pushed into a precarious layer of the labour market.

The tension raises a blunt question: can AI progress be called inclusive if the people training and safeguarding it struggle with rent, health costs and unstable hours?

Follow the money: who gains from AI’s growth?

| Group | Main benefits today |
|---|---|
| Big tech firms | Higher valuations, new products, major market share in cloud and AI services |
| Investors | Rapid gains from AI‑linked stocks and startups |
| Large enterprises | Cost savings from automation, new data insights |
| AI contract workers | Unstable income, limited benefits, short‑term projects |

While shareholders and executives enjoy the upside, the underlying human infrastructure often stands outside the safety nets that normally come with high‑growth sectors: pensions, healthcare, training budgets, or internal mobility.

Key concepts behind the “AI precariat”

Two terms keep surfacing in debates around this issue: “gig work” and “reinforcement learning”. Both shape daily life for AI workers.

Gig work refers to short‑term, task‑based jobs, usually mediated by platforms. Instead of a salary, people are paid per task or per hour without guarantees of future work. This model, familiar from ride‑hailing or food delivery, now underpins much of the AI labour pipeline.

Reinforcement learning, especially from human feedback (often shortened to RLHF), is a technique in which humans rate AI outputs and the model learns which responses are preferred. The process lets companies steer chatbots away from toxic or misleading answers. The catch: RLHF depends on a huge amount of repetitive, sometimes distressing work done by people reading, assessing and flagging content all day.
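
To make the link between a worker's rating and the model concrete, here is a minimal sketch of how one human judgment can become a training signal. It assumes a pairwise (Bradley-Terry style) loss, a common choice for reward models in RLHF. The function name and the example scores are hypothetical, and nothing here describes the actual pipeline of any company named in this article.

```python
import math


def preference_loss(score_preferred: float, score_rejected: float) -> float:
    """Pairwise loss for one human comparison (hypothetical helper).

    A rater has marked one response as better than another. The loss is
    small when the model already scores the preferred response higher,
    and large when it scores the rejected response higher.
    """
    margin = score_preferred - score_rejected
    # Equivalent to -log(sigmoid(margin)), split into two branches so that
    # exp() is only ever called on a non-positive number.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Illustrative scores only: each pair is (model score for the response the
# rater preferred, model score for the response the rater rejected).
comparisons = [(2.0, 0.5), (0.1, 1.4), (1.0, 1.0)]
losses = [preference_loss(p, r) for p, r in comparisons]
average_loss = sum(losses) / len(losses)
```

Training nudges the model to lower this average, so every comparison a contractor submits shifts the scores slightly toward the answers people preferred. That is why the technique needs such large volumes of human ratings.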

Possible paths: regulations, unions and new standards

Several scenarios could unfold over the next few years. One path is a continued race to the bottom, where platforms constantly seek cheaper labour and shorter contracts. That could keep costs low for companies, but push more workers into financial instability.

Another path involves new rules and collective action. Lawmakers in the US and Europe are already debating AI regulation. Labour protections for platform workers may become part of those conversations, covering issues like minimum pay rates, notice periods for project closures, and mental health support for those exposed to harmful content.

Unionisation attempts are also starting to appear among content moderators and data labelers. If such efforts grow, they could change how contracts are written and how sudden changes in pay or workload are handled.

How users and businesses are tied into this system

Ordinary users are not separate from these dynamics. Every time a company rolls out a smarter assistant or a new content filter, it relies on past and present human labour that shaped those systems. Awareness of that reality can influence consumer pressure, corporate branding and political debate.

Businesses deploying AI tools in customer service, healthcare or education also face reputational risks if they benefit from systems built on unstable or harmful working conditions. Some firms are beginning to ask suppliers for clearer information on how data is labelled, moderated and audited, and by whom.

There are practical steps organisations can take: insisting on fair‑work standards in contracts, asking about wage floors for data work, or setting aside budgets to support more stable arrangements instead of always chasing the cheapest option.

The future of AI will not only be defined by algorithms and chips. It will be shaped by whether the people training and supervising these systems gain stable, decent work or remain stuck in a cycle of short contracts and surprise emails that say, without ceremony, that the project is over.